For a long time, I thought collecting large amounts of Reddit data required Python scripts, API access, and a solid technical background. I was wrong. In this article, I’ll share how I scraped and organized Reddit posts and comments for research and content ideas using simple no-code tools, mainly RedScraper, without writing a single line of code.

Why I Wanted Reddit Data in the First Place

My initial goal was to understand what people were really talking about in my niche. I wanted to:

- Find common questions and problems in specific subreddits

- Discover content ideas based on real conversations

- Track sentiment around a few brands and products

- Monitor trends over time rather than relying on one-off posts

I quickly realized that manually copying and pasting posts into a spreadsheet was not sustainable. After a few hours of that, I went looking for a no-code Reddit scraper that would let me automate the process.

The Challenges I Faced Before Using No-Code Tools

Before I discovered dedicated Reddit scraping tools, my attempts looked like this:

- Manual collection: Opening individual threads, copying titles, upvotes, comments, and pasting them into a spreadsheet.

- Browser extensions: Trying generic web scraping extensions that worked okay on some sites but struggled with Reddit’s dynamic content and nested comments.

- DIY API idea: I briefly considered using the Reddit API, but that meant learning how to authenticate, handle requests, and parse JSON. Not exactly no-code.

The big issue was consistency. Anything that wasn’t automated meant I either collected very little data or burned a full day copying posts instead of analyzing them.

Discovering RedScraper as a No-Code Reddit Scraper

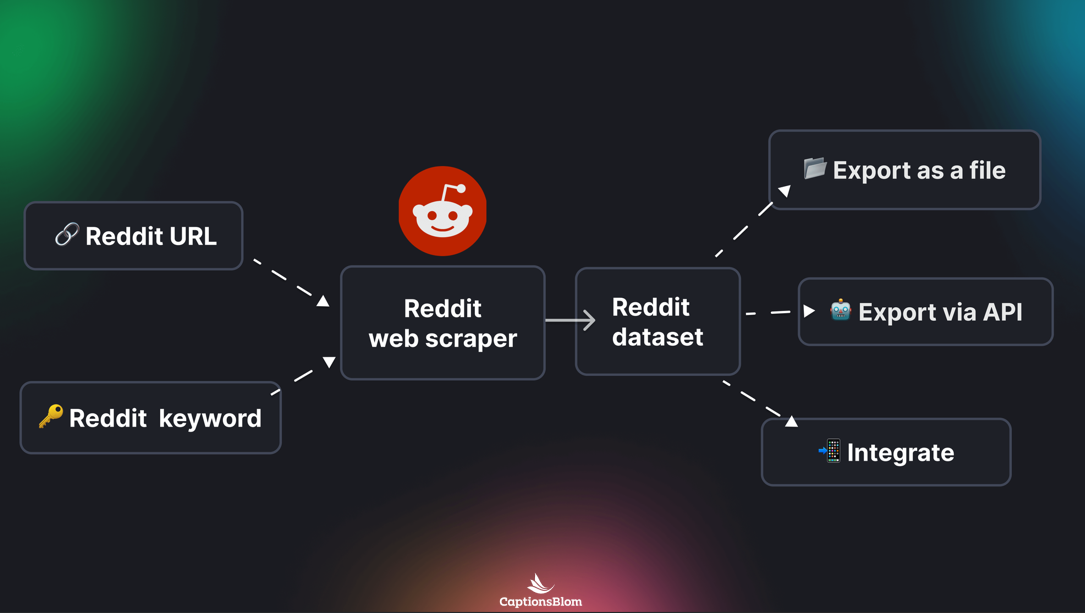

After digging around for tools that could handle Reddit specifically, I came across RedScraper. What caught my attention right away was that it focused on Reddit as its primary platform instead of being a generic web scraper. That meant it understood subreddits, posts, comments, and user data out of the box.

For someone who cannot (and honestly doesn’t want to) write code, RedScraper felt like a dedicated no-code Reddit scraper that turned what would normally be a developer-only workflow into a series of clicks and form fields.

Setting Up My First Reddit Scraping Project

My first project with RedScraper was focused on a handful of subreddits in my industry. I wanted data on:

- Post titles and URLs

- Upvote counts and comment counts

- Post timestamps

- Top-level comments for qualitative insights

The setup process looked roughly like this:

- Selecting the data source: I entered the names of the subreddits I cared about and chose the type of content I wanted (e.g., new posts, top posts, or hot posts).

- Setting filters: I limited results to posts with a minimum number of upvotes so that I didn’t drown in low-engagement content.

- Choosing fields: Instead of writing code to select attributes, I just ticked checkboxes for title, author, upvotes, comments, date, and so on.

- Defining how far back to go: I specified a date range so I could focus on relatively recent posts without scraping years of history.

Within minutes, I had a clear configuration, and all of it was done through a visual interface. No scripts, no API keys, no console windows.
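None of this required code, but it can help to see what those form fields amount to. The sketch below writes the same four choices (source, filters, fields, date range) as a small Python structure. It is purely illustrative: `ScrapeConfig`, `matches`, `select_fields`, and the post keys are hypothetical names I made up, not RedScraper’s actual format or API.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class ScrapeConfig:
    """Hypothetical stand-in for the choices made in the visual form."""
    subreddits: List[str]   # data source
    listing: str            # "new", "top", or "hot"
    min_upvotes: int        # engagement filter
    fields: List[str]       # the ticked checkboxes
    start: date             # date range, inclusive
    end: date


def matches(post: dict, cfg: ScrapeConfig) -> bool:
    """Return True if a post passes the subreddit, upvote, and date filters."""
    return (
        post["subreddit"] in cfg.subreddits
        and post["upvotes"] >= cfg.min_upvotes
        and cfg.start <= post["date"] <= cfg.end
    )


def select_fields(post: dict, cfg: ScrapeConfig) -> dict:
    """Keep only the fields that were selected in the configuration."""
    return {name: post[name] for name in cfg.fields}
```

Each checkbox or form field in the interface corresponds to one attribute here, which is why the visual setup never felt limiting.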

Automating the Data Collection Process

One of the most valuable realizations I had was that scraping Reddit data should be a recurring process, not a one-time export. RedScraper worked less like a one-off export tool and more like a Reddit automation service that keeps data flowing on a schedule.

I scheduled my projects to run automatically. For example, I set a daily scrape of my main subreddits so that new posts appeared in my spreadsheet without any manual work. Setting up the automation came down to three choices:

- Pick how often the scraper runs (daily, weekly, etc.).

- Set how many posts to fetch each time.

- Automatically export or sync data to a CSV or Google Sheets file.

Instead of logging into Reddit and checking every subreddit manually, I could open my spreadsheet and see the latest content, complete with metrics and timestamps.
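One detail worth knowing about recurring runs is that the same post can appear in more than one export. I don’t know how RedScraper handles this internally. If you ever combine exports yourself, though, the idea is simple. The sketch below assumes each row is a dictionary with a `url` column, which is my assumption about the export, and `merge_runs` is a hypothetical helper name.

```python
def merge_runs(existing, new_rows, key="url"):
    """Append rows from a new run, skipping posts that were already collected.

    The first-seen version of each post is kept; later copies are ignored.
    """
    seen = {row[key] for row in existing}
    merged = list(existing)  # copy so the original list is left untouched
    for row in new_rows:
        if row[key] not in seen:
            seen.add(row[key])
            merged.append(row)
    return merged
```

Keeping the first-seen row is the simplest choice. If you care about how upvotes change over time, you would keep every copy and add a column for the run date instead.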

Working With the Exported Reddit Data

Once RedScraper delivered the data, the fun part began: analysis. I used simple tools like spreadsheets and basic filters instead of complex programming.

Some concrete things I was able to do:

- Content idea mining: Sorting by number of comments and upvotes helped me find discussion topics that clearly resonated with people.

- Identifying recurring questions: Filtering by keyword showed me which problems and questions kept appearing across posts.

- Simple sentiment checks: Even without advanced NLP tools, skimming the top comments on popular posts revealed what users loved or hated about certain products.

- Trend spotting: By adding a column for the date and grouping posts by week or month, I could see when certain topics started gaining traction.
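I did all of this with spreadsheet sorting and filters, but for anyone who later outgrows the spreadsheet, the same three operations (sorting by engagement, filtering by keyword, grouping by week) are short in Python. This is a minimal sketch using only the standard library. The column names `title`, `upvotes`, `comments`, and `date` are my assumption about the export, and the sample rows are made up for illustration.

```python
import csv
import io
from collections import Counter
from datetime import date

# Made-up sample rows standing in for an exported CSV file.
SAMPLE_CSV = """title,upvotes,comments,date
How do I price my first product?,120,45,2024-03-04
Weekly wins thread,15,8,2024-03-05
Pricing page teardown,300,90,2024-03-12
How do I find beta testers?,60,30,2024-03-13
"""


def load_posts(text):
    """Parse CSV text into dicts with numeric counts and real dates."""
    posts = []
    for row in csv.DictReader(io.StringIO(text)):
        row["upvotes"] = int(row["upvotes"])
        row["comments"] = int(row["comments"])
        row["date"] = date.fromisoformat(row["date"])
        posts.append(row)
    return posts


def top_by_engagement(posts, n=3):
    """Content idea mining: most-commented posts first, upvotes as tiebreaker."""
    ranked = sorted(posts, key=lambda p: (p["comments"], p["upvotes"]), reverse=True)
    return ranked[:n]


def with_keyword(posts, keyword):
    """Recurring questions: case-insensitive keyword match on the title."""
    needle = keyword.lower()
    return [p for p in posts if needle in p["title"].lower()]


def posts_per_week(posts):
    """Trend spotting: count posts per (ISO year, ISO week)."""
    counts = Counter()
    for p in posts:
        iso = p["date"].isocalendar()
        counts[(iso[0], iso[1])] += 1
    return dict(counts)
```

To run it on a real export, read the file’s contents and pass the text to `load_posts` in place of `SAMPLE_CSV`, then adjust the column names to whatever your export actually uses.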

The key realization was that high-quality, structured data from Reddit is incredibly useful for research, marketing, and product decisions, even if you only have basic spreadsheet skills.

How RedScraper Compared to Other Options

Before settling on RedScraper, I tried and evaluated a few other approaches:

- Generic scraping tools: Many web scrapers are designed to work on any site, but Reddit’s layout, dynamic content, and nested structure made those tools fairly fragile. One design change on Reddit could break the configuration.

- API wrappers and dashboards: Some services or scripts exposed Reddit data through custom dashboards, but most of them still required some degree of technical setup or were limited in what they returned.

- Paid data providers: A few companies sell ready-made Reddit datasets, but they often don’t match your exact niche or date range, and you have less control over what’s collected.

RedScraper stood out because it was tuned specifically for Reddit. It felt closer to a specialized Reddit automation service, but with more control and transparency, and without demanding any programming knowledge.

What I Learned About No-Code Reddit Scraping

This whole experience changed how I think about data collection and automation. A few takeaways stood out:

- No-code doesn’t mean limited: I assumed that because I wasn’t coding, I would be stuck with a shallow set of options. In reality, being able to configure filters, fields, and schedules visually was both powerful and more intuitive.

- Reddit is a goldmine of user insight: Compared to traditional surveys or keyword tools, Reddit gave me raw, unfiltered opinions and conversations, which were often more honest and detailed.

- Automation beats one-off scrapes: A single snapshot is helpful, but automating recurring scrapes showed me how topics evolved over time and when new trends started emerging.

- Data is only useful if you can work with it: Simple exports to CSV or Google Sheets were enough for me to get value. I didn’t need advanced analytics platforms to start making better decisions.

If you are intimidated by the idea of writing scripts but still want structured Reddit data, a dedicated no-code Reddit scraper like RedScraper can be more than enough to power your research and content strategy.

Practical Tips If You Want to Try This Yourself

Based on my own trial and error, here are a few practical tips for anyone considering Reddit scraping without coding:

- Start with a focused goal: Decide whether you want content ideas, competitor insights, product feedback, or trend tracking. This will determine which subreddits, filters, and time ranges you choose.

- Be selective with subreddits: A few high-signal communities are better than a huge list of barely relevant ones. Quality beats quantity when it comes to discussions.

- Use filters to reduce noise: Limit posts by minimum upvotes or comment counts so that your spreadsheet isn’t filled with throwaway content.

- Schedule small, frequent scrapes: Instead of pulling a massive dataset once, run smaller automatic scrapes regularly. This makes it easier to review results and spot changes.

- Respect Reddit’s terms and community norms: Stick to ethical data practices, don’t spam, and remember that behind every username is a real person sharing their opinions.

Final Thoughts

Using RedScraper to collect Reddit data without coding opened doors I assumed were closed to non-developers. What started as an experiment to gather a few posts for research turned into a reliable, automated flow of insights that I now depend on for content planning and decision-making.

If you have been hesitating to explore Reddit as a data source because you don’t code, tools like RedScraper and other Reddit automation services are worth trying. With a bit of initial setup and a clear goal, you can turn Reddit’s massive pool of conversations into structured, actionable information, no scripts required.